Zixun Huang

Senior Research Scientist

Bosch | UC Berkeley

I am an AI Research Scientist at Bosch USA working on 3D vision and neural scene representation. My research asks a simple question: how can we build representations of the physical world that are both geometrically grounded and usable for interaction and decision-making?

I focus on geometric representation learning and differentiable rendering, with an emphasis on developing models that capture consistent 3D structure and support high-fidelity simulation in complex environments.

I believe that scalable and generalizable representations come not from increasing model complexity, but from identifying the minimal structure underlying observations.

This perspective is shaped by my earlier work in robotic fabrication, where geometry, material constraints, and action are inherently coupled. Building on this, I am broadly interested in enabling scalable systems that can operate reliably and efficiently in the physical world.

Selected Publications

ICLR 2026

Top 1% ICLR Score

3DGEER: 3D Gaussian Rendering Made Exact and Efficient for Generic Cameras

Zixun Huang, Cho-Ying Wu, Yuliang Guo, Xinyu Huang, Liu Ren

Can Gaussian rendering be both projective-exact and fast without relying on lossy splatting? We present 3DGEER, a formulation for exact and efficient Gaussian rendering under generic camera models, eliminating approximation errors introduced by splatting-based methods.

CVPR 2026

Highlight (Top 3%)

No Calibration, No Depth, No Problem: Cross-Sensor View Synthesis with 3D Consistency

Cho-Ying Wu, Zixun Huang, Xinyu Huang, Liu Ren

The work synthesizes view-aligned RGB-X pairs (thermal, NIR, SAR, normal maps, ...) from either raw sensor sequences or style maps to facilitate multi-modality learning. It proposes a match-densify-consolidate framework that proceeds from cross-modal image matching, through guided densification, to consolidation in 3DGS.

CVPR 2026

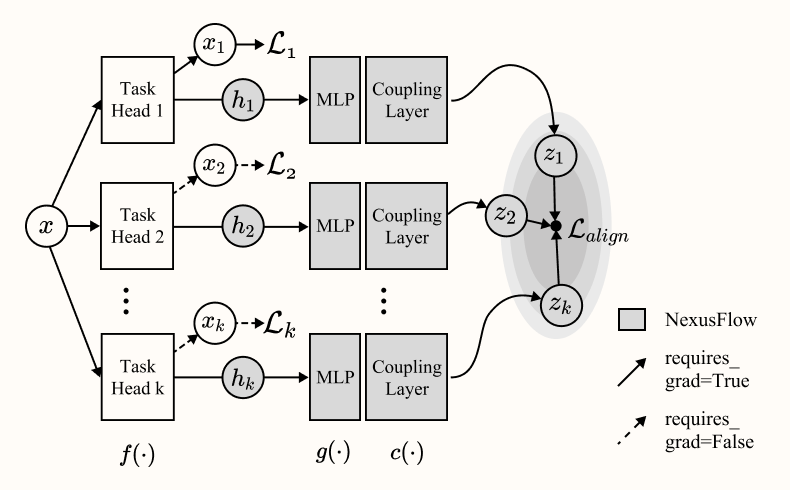

NexusFlow: Unifying Disparate Tasks under Partial Supervision via Invertible Flow Networks

Fangzhou Lin, Yuping Wang, Yuliang Guo, Zixun Huang, Xinyu Huang, Haichong Zhang, Kazunori Yamada, Zhengzhong Tu, Liu Ren, Ziming Zhang

Can we learn across multiple tasks when Task 1 data is collected in region A and Task 2 data in region B? We introduce NexusFlow, a plug-and-play framework based on invertible flow networks that is effective for Partially Supervised Multi-Task Learning (PS-MTL) and the joint learning of diverse dense and sparse prediction tasks.

CVPR Mobile AI Workshop 2025

Oral Presentation

Robust 6DoF Pose Estimation Against Depth Noise and a Comprehensive Evaluation on a Mobile Dataset

Zixun Huang*, Keling Yao*, Seth Z. Zhao, Chuanyu Pan, Allen Y. Yang

Are current 3D object tracking methods truly robust enough for low-fidelity depth sensors like the iPhone LiDAR? We introduce DTTD-Mobile, a benchmark for evaluating 6DoF pose estimation under noisy mobile depth sensing. We further propose DTTD-Net, a Fourier-enhanced RGBD fusion architecture designed to improve robustness against low-quality depth inputs.

Early Work (2018-2023)

Featured by CCTV and 10+ international media outlets

China's First All-Carbon Fiber Architectural Structure Fabricated by Robotic Manipulation

A full-scale architectural structure fabricated using robotic carbon-fiber winding. The project investigates lightweight structural systems enabled by robotic precision and material optimization. The resulting structure achieves a density of 18 kg/m³ with a load-bearing capacity of 400 kg.

Towards Reusable Robotic Formwork for Mass Customization

This line of work explores robotic fabrication techniques for reusable casting molds in curved concrete construction. The research addresses the material and economic inefficiency of single-use molds by developing hybrid clay-foam formwork systems fabricated with 6-axis robotic arms.

Cited by LBNL and ORNL

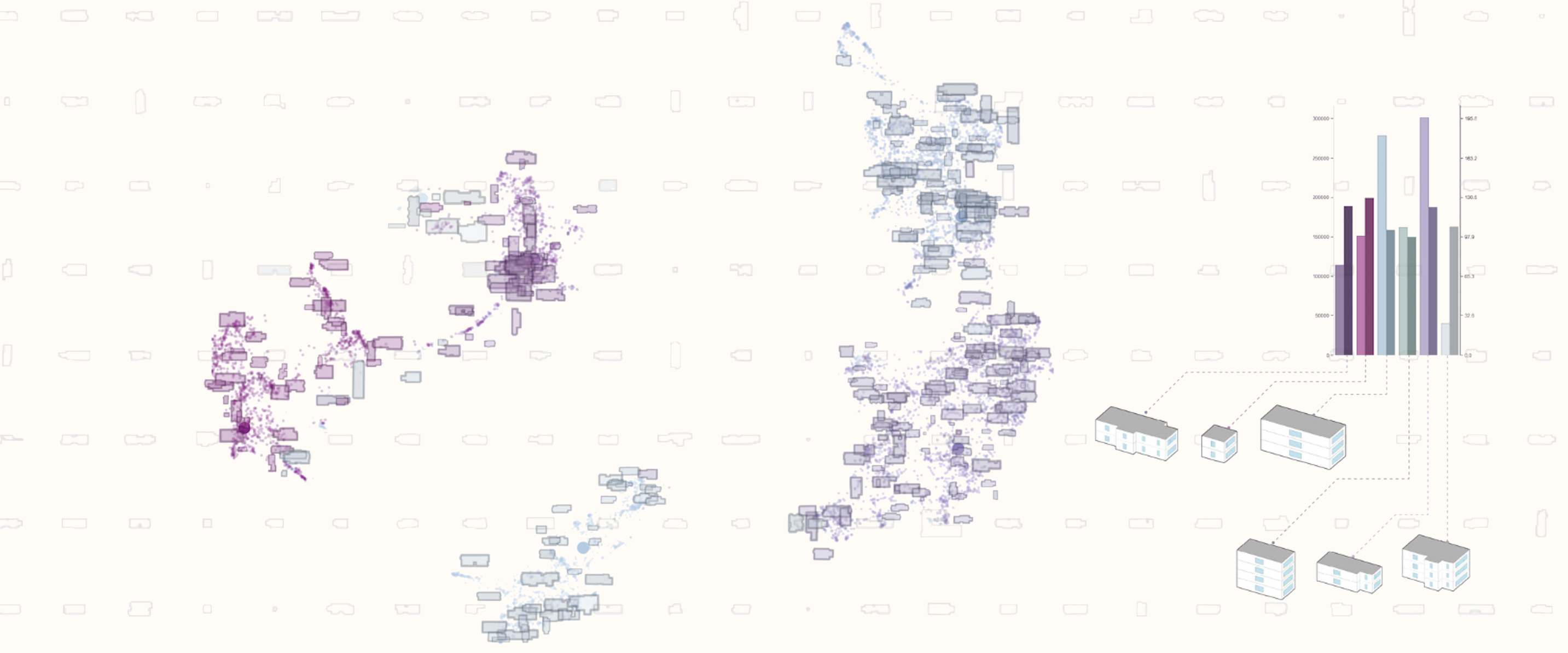

Archetype Representation Learning for Urban Energy Modeling

We develop a self-supervised framework for automatically generating localized building archetypes with detailed geometric representations for urban energy modeling.

Autonomous Discrete Construction with UAV Systems

A UAV-based discrete stacking system with onboard gripping and motion control. The project demonstrates autonomous aerial assembly through integrated perception, control, and fabrication workflows.